A team of researchers at the University of Wisconsin–Madison has developed an algorithm for identifying images of cosmic rays taken by smartphone cameras. The new algorithm was designed by graduate students Miles Winter, James Bourbeau, and Matthew Meehan, working with Professor Justin Vandenbroucke and using data from the Distributed Electronic Cosmic-ray Observatory (DECO). DECO is a smartphone application that uses the phone’s camera sensor to detect cosmic rays and other energetic particles that pass through it. Vandenbroucke and collaborators launched the project publicly back in 2014 with the vision of building a large, distributed cosmic-ray telescope using cell phones of citizen scientists around the world.

The DECO app, currently running on Android devices, takes pictures while the camera is covered to prevent light from contaminating the images. The images include tracks of subatomic particles called muons, which are created when cosmic rays (most of them high-energy protons) interact with the atmosphere. Muons are similar to electrons, but heavier, and leave a straight line of lit-up pixels in the sensor that scientists call a track. However, the cameras can also detect ambient radioactivity, and distinguishing muon tracks from signatures of radioactivity turned out to be a difficult process to automate.

The new algorithm uses a convolutional neural network (CNN), which is a type of machine learning algorithm widely used in computer vision problems. The CNN was trained to identify different types of DECO images and accurately identifies them more than 90% of the time, which is comparable to human performance. This is the first time that robust particle identification has been demonstrated with this data set and is the first step in performing advanced scientific and educational measurements with DECO. The algorithm may also benefit other data sets consisting of particles recorded by image sensors. The promising results are further evidence of the power of machine learning, which is becoming ubiquitous in any field that analyzes data. Justin Vandenbroucke, Principal Investigator on the DECO project, reflected that “the same computer vision technology that will enable self-driving cars is now enabling self-driving physics. It’s amazing to see computers learn to be as good as humans at identifying which subatomic particle produced each event.”

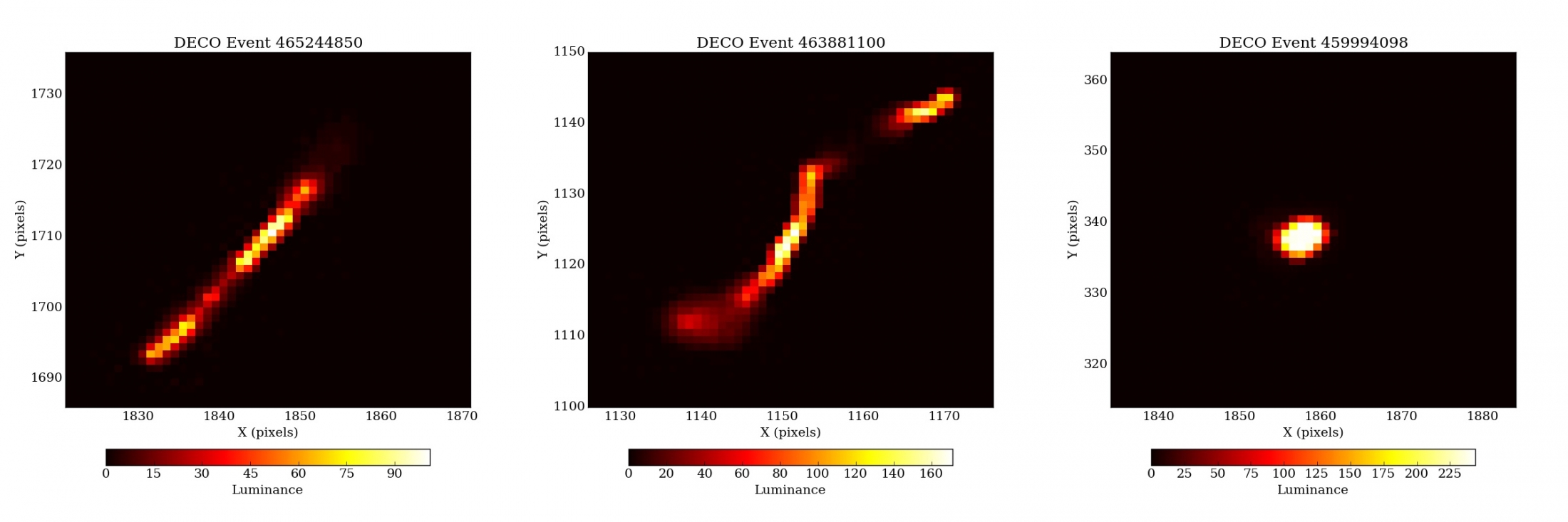

The DECO data set consists of images created when ionizing charged particles, i.e., those with enough energy to remove electrons from atoms, light up pixels they pass through as they deposit energy along their paths. The resulting patterns have four different morphologies: tracks, or straight lines, created by cosmic-ray muons; worms, created by electrons from radioactive decay; spots, also created by radioactivity, but at lower energies; and noise, which is a catch-all for nonparticle images created by thermal noise or other electronic artifacts. Example images from the first three categories can be seen below. The full DECO data set is available on the project website to enable scientific and educational analyses.

Previously, particle identification had been a major hurdle in developing collaborative and individual research projects. While it is straightforward for humans to identify DECO images with little concentrated effort, it has proven difficult for computers using traditional algorithms. Earlier work by Cassidy Schneider, an undergraduate researcher at UW–Madison, showed that it was possible to identify spots and many tracks and worms with geometric variables, but that some tracks and worms are difficult to distinguish. Building on intuition developed from her work, the DECO team turned to the rapidly advancing field of machine learning, which features data-driven algorithms such as convolutional neural networks.

Convolutional neural networks have shown remarkable performance in image recognition tasks such as radiological image analysis and face recognition. In this work, a CNN was taught to recognize the characteristic patterns of the four different types of DECO images. In order for the CNN to learn these patterns, the DECO team first compiled a database of roughly 5,000 images, each labeled as track, worm, spot, or noise. This initial set was classified by human visual inspection, a painstaking effort that motivates the need for automated particle identification.

The 5,000 labeled images then served as the training data set used to teach the CNN to recognize patterns. The CNN consists of a set of learnable, pattern-detecting filters that are applied in successive layers: the first set is applied to the input images, then the output is fed into the second layer, which has its own set of filters. This structure continues for many layers, the output of each being used as the input for the next. Filters in the early layers typically learn simple patterns in an image such as edges or intensity fluctuations from pixel to pixel, while filters in later layers combine this information to learn more complicated patterns, such as curves and shapes. In the final layer, the patterns are mapped to one of four event types: track, worm, spot, or noise.
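To make the idea of a pattern-detecting filter concrete, here is a minimal sketch in plain Python. It is not the DECO network: the 5x5 toy image, the hand-written `vertical_edge` filter, and the helper names `convolve2d` and `relu` are all assumptions made for illustration. In a real CNN the filter values are learned rather than written by hand.

```python
def convolve2d(image, kernel):
    """Slide `kernel` over `image` and return the map of filter responses.

    Only positions where the kernel fits entirely inside the image are used.
    As in most CNN libraries, the kernel is not flipped (cross-correlation).
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for r in range(out_h):
        row = []
        for c in range(out_w):
            total = 0.0
            for i in range(kh):
                for j in range(kw):
                    total += image[r + i][c + j] * kernel[i][j]
            row.append(total)
        output.append(row)
    return output


def relu(feature_map):
    """Keep positive responses and set negative ones to zero."""
    return [[max(0.0, value) for value in row] for row in feature_map]


# Hypothetical 5x5 sensor image: a vertical line of lit pixels in column 2.
image = [
    [0, 0, 1, 0, 0],
    [0, 0, 1, 0, 0],
    [0, 0, 1, 0, 0],
    [0, 0, 1, 0, 0],
    [0, 0, 1, 0, 0],
]

# Hand-written filter that responds to a dark-to-bright step, left to right.
vertical_edge = [
    [-1, 1],
    [-1, 1],
]

# The output of one layer would be the input to the next layer's filters.
layer1 = relu(convolve2d(image, vertical_edge))
```

Each row of `layer1` is nonzero only where the filter sits on the left edge of the bright line, which is the kind of simple feature an early layer might pick up.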

This architecture of nested layers is the hallmark of deep neural networks, which have exploded in popularity in recent years due to advancements in computing power and their remarkable ability to identify complex patterns in data. However, in order for the network to learn how to make accurate predictions, its filters need to learn which patterns are meaningful for identifying each event. For this reason, images from the training data set are first fed through a network whose filters start out random, and each image is assigned an event type. The error between the human-assigned label and the computer-assigned label is computed, and the CNN filters are adjusted to reduce this error. Ideally, after many iterations of training, the error between the human-assigned and computer-assigned labels is very small, i.e., the network accurately identifies the event types.
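The training loop described above can be sketched in a few lines of Python. This is a simplified stand-in, not the DECO team's code: it swaps the convolutional filters for a single layer of weights followed by a softmax, uses cross-entropy as the error, and trains on a made-up `toy_data` set of four-number feature vectors. The function names, learning rate, and step count are likewise assumptions.

```python
import math
import random

EVENT_TYPES = ["track", "worm", "spot", "noise"]


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def predict(weights, features):
    """Return one probability per event type for a single example."""
    scores = [sum(w * x for w, x in zip(row, features)) for row in weights]
    return softmax(scores)


def mean_cross_entropy(weights, data):
    """Average error between predicted probabilities and human labels."""
    return -sum(math.log(predict(weights, x)[y]) for x, y in data) / len(data)


def train(data, n_features, steps=200, learning_rate=0.5, seed=0):
    """Start from random weights, then repeatedly nudge them to reduce the error."""
    rng = random.Random(seed)
    weights = [[rng.uniform(-0.1, 0.1) for _ in range(n_features)]
               for _ in EVENT_TYPES]
    for _ in range(steps):
        grads = [[0.0] * n_features for _ in EVENT_TYPES]
        for x, y in data:
            probs = predict(weights, x)
            for k in range(len(EVENT_TYPES)):
                error = probs[k] - (1.0 if k == y else 0.0)
                for j in range(n_features):
                    grads[k][j] += error * x[j] / len(data)
        for k in range(len(EVENT_TYPES)):
            for j in range(n_features):
                weights[k][j] -= learning_rate * grads[k][j]
    return weights


# Made-up training set: (features, index of the human-assigned event type).
toy_data = [
    ([0.9, 0.1, 0.0, 0.1], 0), ([0.8, 0.2, 0.1, 0.0], 0),
    ([0.1, 0.9, 0.1, 0.0], 1), ([0.2, 0.8, 0.0, 0.1], 1),
    ([0.0, 0.1, 0.9, 0.1], 2), ([0.1, 0.0, 0.8, 0.2], 2),
    ([0.1, 0.0, 0.1, 0.9], 3), ([0.0, 0.1, 0.2, 0.8], 3),
]

initial_weights = train(toy_data, n_features=4, steps=0)  # random, untrained
trained_weights = train(toy_data, n_features=4)
```

Comparing `mean_cross_entropy(initial_weights, toy_data)` with `mean_cross_entropy(trained_weights, toy_data)` shows the error shrinking as the weights are adjusted. A deep CNN follows the same pattern, with the adjustments propagated back through every layer of filters.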

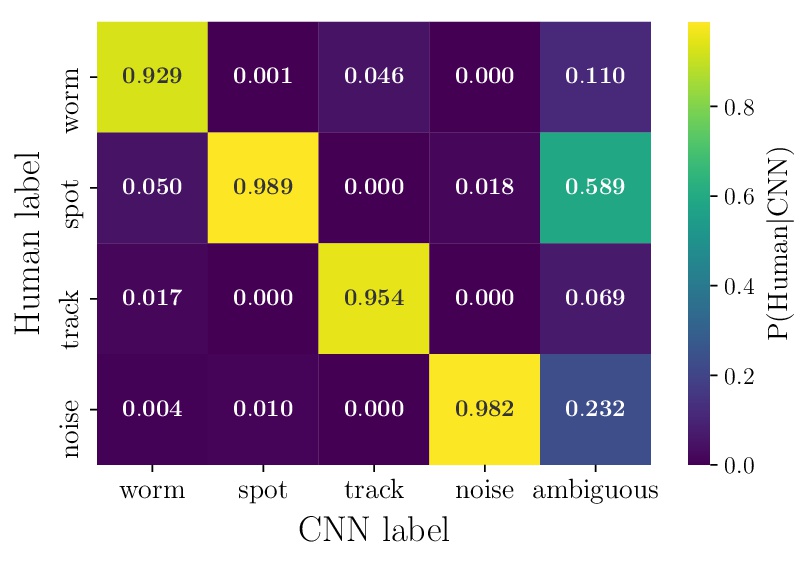

Once the CNN is trained, the algorithm is ready to process new, unseen images and estimate the probability that a given image belongs to each of the four event types. To measure the accuracy of the algorithm, researcher James Bourbeau explained, “we compared the CNN event type predictions to the known human labels to determine how accurate the model predictions are. Overall, the predicted event type is expected to be correct greater than 90% of the time. In particular, the CNN predictions for events classified as tracks are about 95% accurate.” A comparison of the CNN’s predictions with the human labels, called a confusion matrix, can be seen below.
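As an illustration of how such a comparison can be tallied, the sketch below picks the most probable event type for each image and counts agreements and disagreements with the human labels. The five events and their probabilities are invented for the example, and the function names are assumptions, not part of the DECO software.

```python
EVENT_TYPES = ["track", "worm", "spot", "noise"]


def predicted_label(probabilities):
    """Return the event type with the highest predicted probability."""
    best = max(range(len(EVENT_TYPES)), key=lambda k: probabilities[k])
    return EVENT_TYPES[best]


def confusion_matrix(human_labels, cnn_labels):
    """Count events by (human label, predicted label).

    Rows follow the human labels and columns the predicted labels,
    both in the order given by EVENT_TYPES.
    """
    index = {name: i for i, name in enumerate(EVENT_TYPES)}
    matrix = [[0] * len(EVENT_TYPES) for _ in EVENT_TYPES]
    for truth, guess in zip(human_labels, cnn_labels):
        matrix[index[truth]][index[guess]] += 1
    return matrix


def accuracy(matrix):
    """Fraction of events on the diagonal, i.e. where both labels agree."""
    correct = sum(matrix[i][i] for i in range(len(matrix)))
    total = sum(sum(row) for row in matrix)
    return correct / total


# Invented example: human labels and per-type probabilities for five events.
human_labels = ["track", "track", "worm", "spot", "noise"]
cnn_probabilities = [
    [0.90, 0.08, 0.01, 0.01],
    [0.40, 0.55, 0.03, 0.02],  # a track that the classifier calls a worm
    [0.10, 0.85, 0.03, 0.02],
    [0.02, 0.03, 0.90, 0.05],
    [0.01, 0.01, 0.08, 0.90],
]

cnn_labels = [predicted_label(p) for p in cnn_probabilities]
matrix = confusion_matrix(human_labels, cnn_labels)
overall = accuracy(matrix)
```

Off-diagonal entries show which event types get mistaken for which, so a confusion matrix says more than a single accuracy number.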

The results of the CNN classification are published to the DECO data page within two hours of detection so that DECO users can quickly see whether they have detected a cosmic-ray muon. Accurate particle identification opens the door for more sophisticated DECO data analysis in the future. For example, it may be possible to measure air showers created by very high energy cosmic rays and gamma rays.

This is the first time that efficient, automated particle identification has been demonstrated using camera image sensors. Such identification is necessary in order to separate cosmic rays from events produced by local radioactivity. Furthermore, it may be possible to measure the east-west effect using cosmic-ray muons in a classroom setting. This is the famous measurement that originally demonstrated that cosmic rays are positively charged.

The DECO team hopes that this will be an enjoyable new feature for users as well as a helpful aid in scientific pursuits. The results of this project have been published in the journal Astroparticle Physics: “Particle Identification in Camera Image Sensors using Computer Vision,” Volume 104, January 2019, pages 42–53. The article and its preprint can be found here and here. The DECO application is free and open to the public. Please visit DECO for more information or to become involved.